Hello!

Thanks for your work on Lighter! I've been looking for a replacement for Livy for a while, and this is the closest thing I've found on the Internet.

I'm running Lighter on a minikube Kubernetes environment. I have a Spark cluster deployed through Helm charts. I also deployed PostgreSQL through Helm since it seems like Lighter needs it. Here's how my environment looks (in the default namespace):

    NAME                           READY   STATUS    RESTARTS      AGE
    lighter-6675c44b5b-jjpwk       1/1     Running   2 (20m ago)   16h
    nfs-nfs-server-provisioner-0   1/1     Running   1 (21m ago)   16h
    postgres-postgresql-0          1/1     Running   1 (21m ago)   16h
    spark-data-pod                 1/1     Running   1 (21m ago)   16h
    spark-master-0                 1/1     Running   1 (21m ago)   16h
    spark-worker-0                 1/1     Running   1 (21m ago)   16h
    spark-worker-1                 1/1     Running   1 (21m ago)   16h
    spark-worker-2                 1/1     Running   1 (21m ago)   16h

Based on your instructions, I applied the following manifests:

- Manifest for: ServiceAccount, Role, and RoleBinding

      apiVersion: v1
      kind: ServiceAccount
      metadata:
        name: spark
        namespace: default
      ---
      kind: Role
      apiVersion: rbac.authorization.k8s.io/v1
      metadata:
        name: lighter-spark
        namespace: default
      rules:
        - apiGroups: [""]
          resources: ["pods", "services", "configmaps", "pods/log"]
          verbs: ["*"]
      ---
      kind: RoleBinding
      apiVersion: rbac.authorization.k8s.io/v1
      metadata:
        name: lighter-spark
        namespace: default
      subjects:
        - kind: ServiceAccount
          name: spark
          namespace: default
      roleRef:
        kind: Role
        name: lighter-spark
        apiGroup: rbac.authorization.k8s.io

- Manifest for: Lighter Service

      apiVersion: v1
      kind: Service
      metadata:
        name: lighter
        namespace: default
        labels:
          run: lighter
      spec:
        ports:
          - name: api
            port: 8080
            protocol: TCP
          - name: javagw
            port: 25333
            protocol: TCP
        selector:
          run: lighter

- Manifest for: Lighter Deployment

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        namespace: default
        name: lighter
      spec:
        selector:
          matchLabels:
            run: lighter
        replicas: 1
        strategy:
          rollingUpdate:
            maxUnavailable: 0
            maxSurge: 1
        template:
          metadata:
            labels:
              run: lighter
          spec:
            containers:
              - image: ghcr.io/exacaster/lighter:0.0.3-spark3.1.2
                name: lighter
                readinessProbe:
                  httpGet:
                    path: /health/readiness
                    port: 8080
                  initialDelaySeconds: 15
                  periodSeconds: 15
                resources:
                  requests:
                    cpu: "0.25"
                    memory: "512Mi"
                ports:
                  - containerPort: 8080
                env:
                  - name: LIGHTER_STORAGE_JDBC_USERNAME
                    value: postgres
                  - name: LIGHTER_STORAGE_JDBC_PASSWORD
                    value: secretpassword
                  - name: LIGHTER_STORAGE_JDBC_URL
                    value: jdbc:postgresql://postgres-postgresql:5432/lighter
                  - name: LIGHTER_STORAGE_JDBC_DRIVER_CLASS_NAME
                    value: org.postgresql.Driver
                  - name: LIGHTER_SPARK_HISTORY_SERVER_URL
                    value: http://spark-master-svc:7077/spark-history
                  - name: LIGHTER_MAX_RUNNING_JOBS
                    value: "15"
                  - name: LIGHTER_KUBERNETES_CONTAINER_IMAGE
                    value: "ghcr.io/exacaster/spark:latest"
            serviceAccountName: spark

I did not bother with the ingress portion because I am just testing and use port-forwarding to see the Spark and Lighter UIs.

I am sending a POST request to Lighter's Batch API with the following:

    {
      "name": "Test",
      "file": "/data/spark-examples.jar",
      "args": ["/data/test.txt"],
      "files": ["/data/test.txt"],
      "className": "org.apache.spark.examples.JavaWordCount"
    }
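
For anyone trying to reproduce this, here is a minimal sketch of how such a request could be sent from Python using only the standard library. The base URL `http://localhost:8080` (a local port-forward to the `lighter` service) and the endpoint path `/lighter/api/batches` are assumptions and should be checked against the Lighter REST API documentation; `build_batch_request` and `send` are hypothetical helper names.

```python
import json
import urllib.request

# Assumption: Lighter's API port is forwarded to localhost:8080.
LIGHTER_URL = "http://localhost:8080"
# Assumption: batch endpoint path; verify against the Lighter REST API docs.
BATCHES_PATH = "/lighter/api/batches"

# Same payload as in the request shown above.
payload = {
    "name": "Test",
    "file": "/data/spark-examples.jar",
    "args": ["/data/test.txt"],
    "files": ["/data/test.txt"],
    "className": "org.apache.spark.examples.JavaWordCount",
}


def build_batch_request(base_url, batch):
    """Build (without sending) a JSON POST request for a batch submission."""
    body = json.dumps(batch).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + BATCHES_PATH,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=10):
    """Send the request and return the HTTP status and the decoded JSON body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status, json.loads(response.read())


request = build_batch_request(LIGHTER_URL, payload)
```

Calling `send(request)` while the port-forward is active submits the batch; the returned JSON should include the fields that Lighter fills in, such as the job state.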

After the request is accepted, the other fields are filled in automatically and the UI reflects the submission. However, the state of the job is always "Starting", and I can't delete the job by pressing the "X" button.

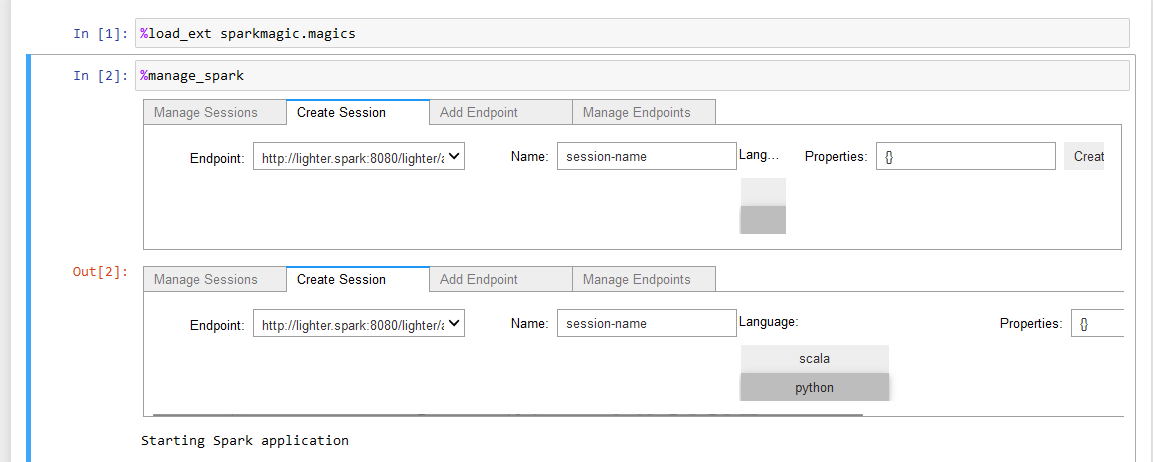

I'm not sure what is going on. I checked the Lighter pod's logs, but there is nothing related to the submission. Could you point me in the right direction to figure out what is missing or misconfigured? I'm attaching a screenshot of the UI.

Thank you for your time!